How to Scrape DefiLlama Data for DeFi Research and Blockchain Analytics

If you want to scrape DefiLlama data for DeFi research, blockchain analytics, or competitive intelligence, this guide walks you through the full process. You will learn what data you can extract, how to configure the scraper, and how to turn DefiLlama's comprehensive DeFi metrics into structured datasets ready for analysis.

Why Scrape DefiLlama Data?

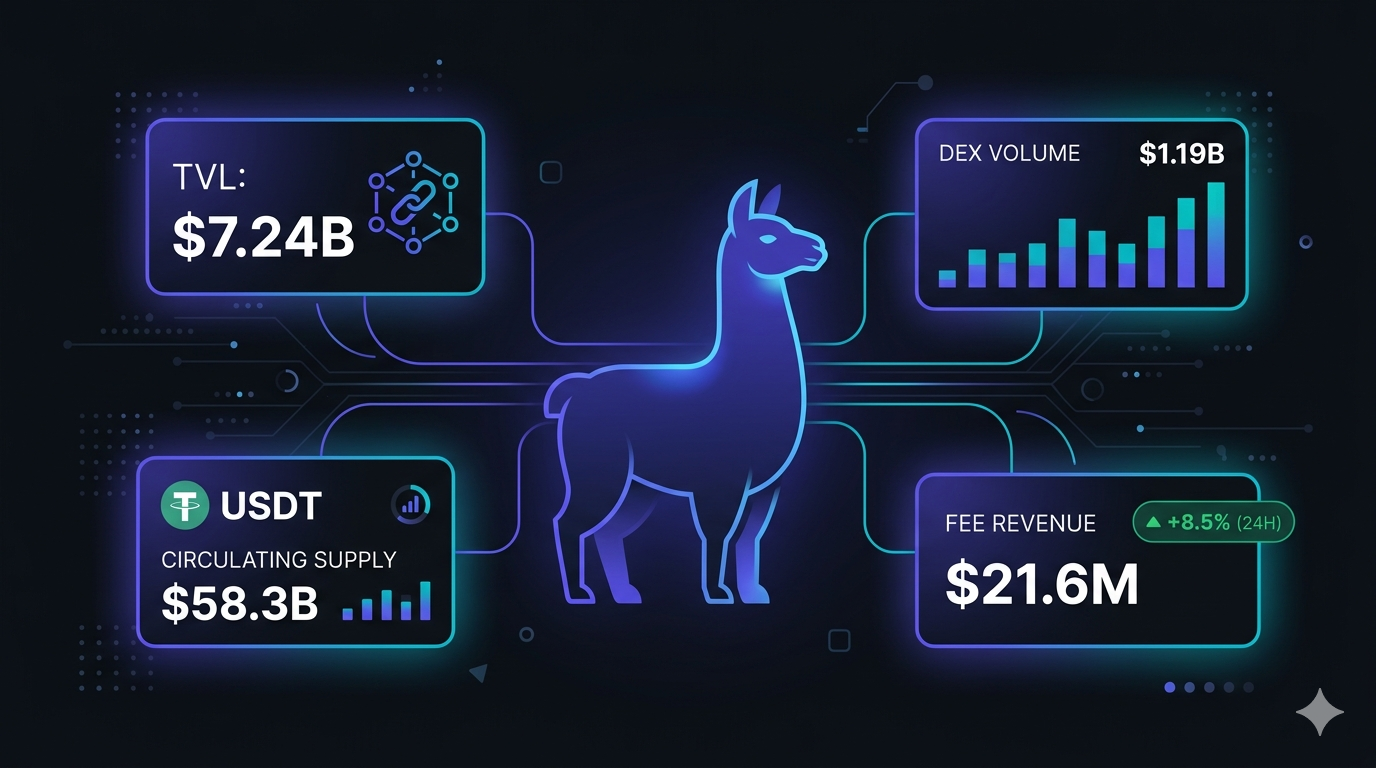

DefiLlama is the industry standard for DeFi analytics. It aggregates Total Value Locked (TVL), trading volumes, fee data, and stablecoin metrics across virtually every major blockchain and DeFi protocol — and it does so without requiring you to hold any assets or connect a wallet.

The breadth and depth of DefiLlama's data make it valuable for a wide range of use cases:

- Market research — compare TVL, fees, and volume across chains and protocols to understand where DeFi activity is concentrated

- Competitive intelligence — track how a protocol's TVL and revenue evolve relative to competitors in the same category

- Investment research — evaluate protocol fundamentals using TVL-to-revenue ratios, fee trends, and multi-chain expansion signals

- Stablecoin monitoring — track circulating supply, peg stability, and cross-chain distribution for major stablecoins

- Index construction — build custom DeFi indices weighted by TVL, fees, or volume using DefiLlama's structured data

- Academic research — study DeFi market dynamics, protocol adoption curves, and the relationship between on-chain activity and market metrics

The challenge is that DefiLlama exposes data across many different API endpoints with varying schemas. Pulling together a unified view of chains, protocols, DEXes, fees, and stablecoins requires significant engineering effort if you build from scratch. The DefiLlama Scraper handles that complexity for you.

What Data You Can Extract from DefiLlama

The DefiLlama Scraper extracts five categories of structured data, all unified into a single dataset with a type field for easy filtering.

Chain-Level Data

Always scraped. Includes TVL, historical TVL changes, and aggregated fee data per blockchain.

| Field | Description | Example |
|---|---|---|
| name | Blockchain name | Ethereum |
| tvl | Current Total Value Locked (USD) | 62345678901 |
| tvlChange1d / 7d / 30d | TVL percentage change over time windows | 1.23, -2.45, 5.67 |
| fees24h / 7d / 30d | Aggregated fee generation (USD) | 4567890, 31234567, 128765432 |
| feesChange7dover7d | Week-over-week fee change percentage | 12.34 |
| tokenSymbol | Native token symbol | ETH |
| chainId | EVM chain ID (where applicable) | 1 |

Protocol Data

Enabled via scrapeProtocols. TVL, category, multi-chain deployment, and social data for ~4,000 DeFi protocols.

| Field | Description | Example |
|---|---|---|
| name | Protocol name | Lido |
| category | DeFi category | Liquid Staking |
| tvl | Protocol TVL (USD) | 14567890123 |
| tvlChange1h / 1d / 7d | TVL change percentages | 0.05, 1.12, -0.87 |
| chains | List of chains where protocol is deployed | ["Ethereum", "Solana", "Polygon"] |
| symbol | Protocol token symbol | LDO |
| twitter | Twitter handle | LidoFinance |

DEX Volume Data

Enabled via scrapeDexes. Per-protocol trading volumes across 24h, 7d, 30d, and all-time windows.

| Field | Description | Example |
|---|---|---|
| name | DEX protocol name | Uniswap |
| volume24h / 7d / 30d / 1y | Trading volume in USD per window | 1234567890, 8765432100 |
| volumeAllTime | Cumulative all-time trading volume | 1890567234000 |
| volumeChange1d / 7d / 1m | Volume change percentages | 5.43, -2.10, 12.87 |
| chains | Chains where DEX is deployed | ["Ethereum", "Arbitrum", "Base"] |

Fee and Revenue Data

Enabled via scrapeFees. Per-protocol fee generation and revenue across multiple time windows.

| Field | Description | Example |
|---|---|---|
| name | Protocol name | Aave |
| fees24h / 7d / 30d / 1y | Fee generation in USD | 987654, 6543210, 27654321 |
| feesAllTime | Cumulative all-time fees | 890123456 |
| feesChange1d / 7d / 1m | Fee change percentages | 2.34, -1.56, 8.90 |
| category | DeFi category | Lending |

Stablecoin Data

Enabled via scrapeStablecoins. Circulating supply, peg type, peg mechanism, price, and cross-chain distribution.

| Field | Description | Example |
|---|---|---|
| name / symbol | Stablecoin name and ticker | Tether / USDT |
| pegType | What the stablecoin is pegged to | peggedUSD |
| pegMechanism | How the peg is maintained | fiat-backed |
| price | Current price (should be ~1.00) | 0.9998 |
| circulating | Current circulating supply (USD) | 118234567890 |
| circulatingPrevDay / Week / Month | Historical supply snapshots | 118200000000 |
| chains | Chains where the stablecoin is deployed | ["Ethereum", "Tron", "BSC"] |

Common Use Cases for DefiLlama Data

DeFi Market Research

DefiLlama is the go-to source for understanding where capital is deployed across the DeFi ecosystem. By scraping chain and protocol TVL data, you can build comprehensive market maps: which chains are growing fastest, which protocol categories are gaining share, and how TVL distribution is shifting over time.

Compare TVL-to-fees ratios across protocols to evaluate capital efficiency. Identify protocols that generate disproportionate fee revenue relative to their TVL — a sign of high utilization and strong product-market fit.

Protocol Competitive Analysis

The category field on protocol records lets you group competitors and compare them directly. Filter all Lending protocols and rank by TVL, fee generation, and chain coverage. Track how Aave, Compound, and Morpho compare across multiple dimensions simultaneously.

For teams building DeFi products or evaluating investments, this kind of structured competitive view is difficult to assemble manually but trivial with the scraper.
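
The grouping described above can be sketched in a few lines of Python. This is a minimal illustration rather than part of the scraper: `rank_category` is a hypothetical helper, and the sample TVL figures and chain lists are made up for the example. Only the field names (type, category, tvl, chains) come from the dataset.

```python
def rank_category(records, category, top_n=10):
    """Return the top_n protocol records in one category, largest TVL first."""
    peers = [
        r for r in records
        if r.get("type") == "protocol" and r.get("category") == category
    ]
    # Treat a missing or null TVL as 0 so incomplete records sort last.
    return sorted(peers, key=lambda r: r.get("tvl") or 0, reverse=True)[:top_n]


# Illustrative records only; the numbers are not real DefiLlama values.
sample = [
    {"type": "protocol", "name": "Aave", "category": "Lending", "tvl": 300, "chains": ["Ethereum", "Polygon"]},
    {"type": "protocol", "name": "Compound", "category": "Lending", "tvl": 100, "chains": ["Ethereum"]},
    {"type": "protocol", "name": "Lido", "category": "Liquid Staking", "tvl": 500, "chains": ["Ethereum"]},
    {"type": "protocol", "name": "Morpho", "category": "Lending", "tvl": 200, "chains": ["Ethereum", "Base"]},
]

for rank, p in enumerate(rank_category(sample, "Lending"), start=1):
    print(rank, p["name"], p["tvl"], "chains:", len(p["chains"]))
```

Ranking by fee generation works the same way if you filter on the fee records and sort on one of the fee fields instead.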

Chain Ecosystem Benchmarking

Chain-level TVL data is the primary metric used to compare blockchain ecosystems. Scrape the top chains and track TVL changes over 1d, 7d, and 30d windows to identify momentum — which chains are gaining liquidity and which are losing it.

Combine with fee data to calculate revenue-per-TVL ratios for each chain. Ethereum generating $4.5M/day in fees on $62B TVL tells a different story than a chain with similar TVL but minimal fee generation.
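
As a quick worked example of that ratio, the sketch below uses the round figures from the paragraph above. The helper name `fee_to_tvl_ratio` is an assumption for illustration; in a real dataset you would pass each chain record's fees24h and tvl.

```python
def fee_to_tvl_ratio(fees_24h, tvl):
    """Daily fees as a fraction of TVL; returns 0.0 when TVL is zero or missing."""
    return fees_24h / tvl if tvl else 0.0


# Round figures from the text: $4.5M/day in fees on $62B TVL.
ratio = fee_to_tvl_ratio(4_500_000, 62_000_000_000)
print(f"daily fees / TVL = {ratio:.6%}")
print(f"in basis points  = {ratio * 10_000:.3f} bps per day")
```

A chain with similar TVL but a tenth of the fees would show a ratio ten times smaller, which makes the comparison easy to rank.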

Stablecoin Market Monitoring

Stablecoin circulating supply is one of the most reliable on-chain indicators of market liquidity. Rising stablecoin supply signals dry powder entering the market; falling supply suggests capital is leaving. Tracking USDT, USDC, DAI, and others over time gives you a real-time liquidity barometer.

The price field lets you monitor peg stability. Significant deviations from $1.00 are early warning signals of depeg events — tracking this across all stablecoins simultaneously provides a broad safety monitor for the ecosystem.
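
One way to turn the price field into a simple peg monitor is sketched below. The 1% threshold, the `find_depegs` helper, and the "XUSD" record are assumptions for illustration; only USD-pegged coins (pegType of peggedUSD) are compared against 1.00.

```python
def find_depegs(records, threshold=0.01):
    """Return (symbol, price, deviation) for USD-pegged stablecoins
    whose price is off 1.00 by more than threshold."""
    alerts = []
    for r in records:
        if r.get("type") != "stablecoin" or r.get("pegType") != "peggedUSD":
            continue
        price = r.get("price")
        if price is None:
            continue  # no price reported, nothing to compare
        deviation = price - 1.0
        if abs(deviation) > threshold:
            alerts.append((r.get("symbol"), price, round(deviation, 4)))
    # Largest absolute deviation first.
    return sorted(alerts, key=lambda a: abs(a[2]), reverse=True)


sample = [
    {"type": "stablecoin", "symbol": "USDT", "pegType": "peggedUSD", "price": 0.9998},
    # Hypothetical coin trading 3% below its peg.
    {"type": "stablecoin", "symbol": "XUSD", "pegType": "peggedUSD", "price": 0.97},
]

for symbol, price, deviation in find_depegs(sample):
    print(f"{symbol}: price {price}, deviation {deviation:+.4f}")
```

The right threshold depends on how noisy the price feed is, so treat 1% as a starting point rather than a recommendation.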

DEX Volume Trend Analysis

DEX volume data reveals where on-chain trading activity is concentrated. Track Uniswap, Curve, Raydium, and others across 24h, 7d, and 30d windows. Identify volume migration between chains — when activity shifts from Ethereum to Base or Arbitrum, DEX volume data is where you see it first.

Volume change metrics (day-over-day, week-over-week, month-over-month) let you surface emerging trends early: which DEXes are gaining volume momentum and which are losing ground.

Index and Portfolio Construction

DefiLlama data provides the raw material for building quantitative DeFi indices. Construct TVL-weighted indices across all protocols in a given category, or build fee-weighted indices that overweight the most productive protocols. Schedule regular scraper runs to keep index weights updated as TVL and fee distributions shift.
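
A minimal sketch of the weighting step follows. `index_weights` is a hypothetical helper, and the sample values are made up; the field names match the dataset. It assumes each member's weight is simply its share of the category total, with no caps or liquidity screens.

```python
def index_weights(records, category, record_type="protocol", weight_field="tvl"):
    """Map name -> weight, proportional to weight_field within one category."""
    members = {
        r["name"]: r.get(weight_field) or 0
        for r in records
        if r.get("type") == record_type and r.get("category") == category
    }
    total = sum(members.values())
    if total == 0:
        return {}  # nothing to weight
    return {name: value / total for name, value in members.items()}


# Illustrative records only; the numbers are not real DefiLlama values.
sample = [
    {"type": "protocol", "name": "Aave", "category": "Lending", "tvl": 300},
    {"type": "protocol", "name": "Morpho", "category": "Lending", "tvl": 200},
    {"type": "protocol", "name": "Compound", "category": "Lending", "tvl": 100},
    {"type": "fee", "name": "Aave", "category": "Lending", "fees30d": 90},
    {"type": "fee", "name": "Morpho", "category": "Lending", "fees30d": 10},
]

print(index_weights(sample, "Lending"))
print(index_weights(sample, "Lending", record_type="fee", weight_field="fees30d"))
```

Recomputing the weights after each scheduled run gives you the rebalancing input; how often to rebalance is a separate design decision.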

Challenges of Collecting DefiLlama Data Manually

Even with a public API, assembling comprehensive DefiLlama data involves real complexity:

- Multiple endpoints — chains, protocols, DEXes, fees, and stablecoins each live on separate API endpoints with different response schemas

- Schema normalization — each endpoint returns differently structured data. Building a unified view requires custom mapping code

- Data volume — the protocols endpoint returns ~4,000 records. Working with this at scale requires pagination handling and efficient storage

- Rate limit management — hitting multiple endpoints in sequence requires thoughtful request pacing

- Ongoing maintenance — DefiLlama updates its API and data schema periodically, breaking integrations that depend on specific field names or structures

- No unified type field — the raw API returns data siloed by endpoint. Creating a single queryable dataset requires merging and tagging records manually

The DefiLlama Scraper handles all of this, delivering a single clean dataset with a type field (chain, protocol, dex, fee, stablecoin) that you can filter immediately in any downstream tool.

Step-by-Step: How to Scrape DefiLlama

Here is how to scrape DefiLlama data using the DefiLlama Scraper on Apify.

Step 1 — Decide Which Data Categories You Need

The scraper gives you granular control over which data categories to include. Chain-level TVL and fee data is always collected. Optional categories are toggled with boolean inputs:

- scrapeProtocols — TVL, category, and deployment info for ~4,000 protocols

- scrapeDexes — trading volumes across multiple time windows for all tracked DEXes

- scrapeFees — fee and revenue data per protocol

- scrapeStablecoins — circulating supply, peg details, and price for all tracked stablecoins

Enable only what you need to keep run times short and datasets focused.

Step 2 — Configure the Scraper Input

Head to the DefiLlama Scraper on Apify and configure your run:

- Set maxChains to control how many chains (by TVL) to include — default is 100, range is 1–1000

- Toggle scrapeProtocols, scrapeDexes, scrapeFees, and/or scrapeStablecoins based on which categories you want

- Click Start to begin the extraction

No API key is required. The scraper queries DefiLlama's public API endpoints directly.
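
If you prefer to prepare the input as JSON, the sketch below builds it from the options listed above. The input keys (maxChains, scrapeProtocols, scrapeDexes, scrapeFees, scrapeStablecoins) and the 1 to 1000 range come from this guide; the `build_run_input` helper and its choice of False as the default for the toggles are assumptions for illustration.

```python
import json


def build_run_input(max_chains=100, protocols=False, dexes=False,
                    fees=False, stablecoins=False):
    """Build the scraper input, rejecting a maxChains outside 1-1000."""
    if not 1 <= max_chains <= 1000:
        raise ValueError("maxChains must be between 1 and 1000")
    return {
        "maxChains": max_chains,
        "scrapeProtocols": protocols,
        "scrapeDexes": dexes,
        "scrapeFees": fees,
        "scrapeStablecoins": stablecoins,
    }


# Top 50 chains plus protocol and stablecoin data.
run_input = build_run_input(max_chains=50, protocols=True, stablecoins=True)
print(json.dumps(run_input, indent=2))
```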

Step 3 — Run the Scraper

Once started, the scraper will:

- Fetch chain TVL and fee data for your configured chain limit

- Optionally fetch protocol, DEX, fee, and stablecoin data based on your toggles

- Unify all results into a single dataset with a type field for easy filtering

- Deliver structured JSON output ready for analysis

Runs complete quickly. Chain-only runs take seconds. Full runs including all five categories complete in under a minute for most configurations.

Step 4 — Export Structured Results

Once the scraper finishes, export your results in your preferred format:

- JSON — ideal for developers integrating DefiLlama data into analytics pipelines, dashboards, or quantitative models

- CSV — perfect for Excel, Google Sheets, or database imports

- API — access results programmatically via the Apify API for automated workflows

Each record includes the full set of fields for its type, unified under the type discriminator for easy downstream filtering.
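
For the API route, a minimal sketch using only the Python standard library is shown below. It assumes the Apify API's dataset items endpoint with its format, clean, and token query parameters; the dataset ID and token are placeholders you would copy from your own run and account.

```python
import json
import urllib.parse
import urllib.request

API_BASE = "https://api.apify.com/v2"


def dataset_items_url(dataset_id, token, fmt="json"):
    """Build the URL for downloading a dataset's items in the given format."""
    query = urllib.parse.urlencode({"format": fmt, "clean": "true", "token": token})
    return f"{API_BASE}/datasets/{dataset_id}/items?{query}"


def fetch_items(dataset_id, token):
    """Download all items of a dataset as a list of dicts (performs a network call)."""
    with urllib.request.urlopen(dataset_items_url(dataset_id, token), timeout=30) as resp:
        return json.load(resp)


# Placeholders: substitute your own dataset ID and API token.
print(dataset_items_url("YOUR_DATASET_ID", "YOUR_APIFY_TOKEN", fmt="csv"))
```

Passing fmt="csv" yields the same data in a form you can open directly in a spreadsheet.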

Ready to try it? Run the DefiLlama Scraper on Apify and get your first dataset in minutes.

Example Output (Real Data Preview)

Here is what the actual output looks like from the DefiLlama Scraper. All records share the same dataset, differentiated by the type field:

[

{

"type": "chain",

"name": "Ethereum",

"tvl": 62345678901,

"tokenSymbol": "ETH",

"chainId": 1,

"tvlChange1d": 1.23,

"tvlChange7d": -2.45,

"tvlChange30d": 5.67,

"fees24h": 4567890,

"fees7d": 31234567,

"fees30d": 128765432

},

{

"type": "protocol",

"name": "Lido",

"slug": "lido",

"symbol": "LDO",

"category": "Liquid Staking",

"chains": ["Ethereum", "Solana", "Polygon"],

"tvl": 14567890123,

"tvlChange1d": 1.12,

"tvlChange7d": -0.87,

"twitter": "LidoFinance"

},

{

"type": "dex",

"name": "Uniswap",

"slug": "uniswap",

"chains": ["Ethereum", "Arbitrum", "Polygon", "Optimism", "Base"],

"volume24h": 1234567890,

"volume7d": 8765432100,

"volumeChange1d": 5.43,

"volumeChange7d": -2.10

},

{

"type": "fee",

"name": "Aave",

"slug": "aave",

"category": "Lending",

"fees24h": 987654,

"fees7d": 6543210,

"feesChange7d": -1.56

},

{

"type": "stablecoin",

"name": "Tether",

"symbol": "USDT",

"pegType": "peggedUSD",

"pegMechanism": "fiat-backed",

"price": 0.9998,

"circulating": 118234567890,

"chains": ["Ethereum", "Tron", "BSC", "Solana", "Avalanche"]

}

]

Key things to notice:

- Unified dataset — all five data types land in the same dataset. Filter with type === "chain" or type === "protocol" to segment exactly what you need

- TVL change windows — chain records include 1d, 7d, and 30d TVL changes, and protocol records include 1h, 1d, and 7d changes, giving you momentum signals built in

- Multi-chain arrays — protocols, DEXes, and stablecoins include a chains array showing cross-chain deployment, critical for understanding capital distribution

- Fee generation fields — both chain-level and protocol-level fee data is included, enabling revenue analysis at every level of the stack

- Stablecoin peg details — pegType and pegMechanism let you segment algorithmic versus fiat-backed stablecoins immediately

This structured output is ready to feed directly into analytics tools, research workflows, or quantitative models.
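
As a small illustration of filtering on the type field, the sketch below splits a mixed list of records into one list per type. The `split_by_type` helper is hypothetical, and the sample is an abbreviated version of the records shown above.

```python
from collections import defaultdict


def split_by_type(records):
    """Group dataset records into {type: [records]} using the type field."""
    groups = defaultdict(list)
    for r in records:
        groups[r.get("type", "unknown")].append(r)
    return dict(groups)


# Abbreviated versions of the example records above.
sample = [
    {"type": "chain", "name": "Ethereum", "tvl": 62345678901},
    {"type": "protocol", "name": "Lido", "tvl": 14567890123},
    {"type": "dex", "name": "Uniswap", "volume24h": 1234567890},
    {"type": "fee", "name": "Aave", "fees24h": 987654},
    {"type": "stablecoin", "name": "Tether", "price": 0.9998},
]

by_type = split_by_type(sample)
for record_type, items in by_type.items():
    print(record_type, [r["name"] for r in items])
```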

Try the DefiLlama Scraper now — no coding required.

Automating DefiLlama Data Collection

For ongoing research, market monitoring, or index maintenance, you need continuous data collection, not one-off runs. The Apify platform supports full automation:

Scheduled Runs

Set up recurring scrapes on any schedule — hourly, daily, or weekly. The scraper runs automatically and appends results to a persistent dataset you can access at any time. For TVL trend analysis, daily runs build up a clean time series. For stablecoin peg monitoring, more frequent runs help you catch depeg events early.

API Integration

Use the Apify API to trigger scraper runs programmatically and retrieve results. This lets you integrate DefiLlama data into your existing workflows:

- Feed fresh TVL and fee data into dashboards or analytics platforms automatically

- Trigger alerts when chain TVL drops more than a threshold percentage

- Build tools that compare protocol metrics and surface anomalies

- Connect to data pipelines, databases, or BI tools via API or CSV exports
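
The TVL alert from the list above could look roughly like this once you have the records in memory. The `tvl_drop_alerts` helper, the 10% threshold, and the "ExampleChain" record are assumptions for illustration; the tvlChange fields are percentages, so a drop shows up as a negative number.

```python
def tvl_drop_alerts(records, window="tvlChange1d", max_drop_pct=10.0):
    """Return (name, change) for chains whose TVL fell by more than
    max_drop_pct percent over the given change window."""
    return [
        (r["name"], r[window])
        for r in records
        if r.get("type") == "chain"
        and r.get(window) is not None
        and r[window] < -max_drop_pct
    ]


sample = [
    {"type": "chain", "name": "Ethereum", "tvlChange7d": -2.45},
    # Hypothetical chain with a sharp weekly drop.
    {"type": "chain", "name": "ExampleChain", "tvlChange7d": -15.2},
]

for name, change in tvl_drop_alerts(sample, window="tvlChange7d"):
    print(f"ALERT: {name} TVL changed {change}% over the window")
```

How the alert is delivered (email, chat message, ticket) is left to whatever tooling you already use.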

Node.js Example

For a complete working example showing how to call this scraper from Node.js, see the GitHub repository.

Webhooks

Configure webhooks to get notified when a scraper run completes. This enables event-driven architectures where fresh DefiLlama data triggers downstream processing — metric updates, alert evaluation, or index rebalancing — immediately rather than on a polling schedule.
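
On the receiving side, the handler mainly needs to find out which dataset the finished run wrote to. The sketch below assumes Apify's default webhook payload, which to my understanding carries an eventType string and a resource object describing the run (including defaultDatasetId). Check the payload your webhook actually sends before relying on these keys; `dataset_id_from_webhook` is a hypothetical helper.

```python
from typing import Optional


def dataset_id_from_webhook(payload: dict) -> Optional[str]:
    """Return the run's default dataset ID for a successful run, else None."""
    # Assumed event name for a successfully finished run.
    if payload.get("eventType") != "ACTOR.RUN.SUCCEEDED":
        return None
    resource = payload.get("resource") or {}
    return resource.get("defaultDatasetId")


# Hand-written payload mimicking the assumed default shape.
example_payload = {
    "eventType": "ACTOR.RUN.SUCCEEDED",
    "resource": {"id": "RUN_ID", "defaultDatasetId": "DATASET_ID"},
}

print(dataset_id_from_webhook(example_payload))
```

With the dataset ID in hand, downstream code can download the items and run metric updates or alert checks.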

Analyzing DefiLlama Data Effectively

With a unified DefiLlama dataset in hand, here are practical analysis patterns to apply immediately.

TVL Momentum Scoring

Compute a momentum score per chain or protocol by combining TVL change windows:

- Short-term momentum = tvlChange1d weighted at 50%

- Medium-term momentum = tvlChange7d weighted at 30%

- Long-term momentum = tvlChange30d weighted at 20%

Rank chains or protocols by this composite score to surface what is gaining traction in the current market environment.
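
The weighting above translates directly into code. In this sketch a missing window is treated as 0, which is an assumption: records that lack one of the three fields will score lower than records that have all of them, so compare like with like. The Ethereum values are the ones from the example output; "ExampleChain" is made up.

```python
WEIGHTS = {"tvlChange1d": 0.5, "tvlChange7d": 0.3, "tvlChange30d": 0.2}


def momentum_score(record):
    """Weighted sum of the TVL change windows; missing windows count as 0."""
    return sum(w * (record.get(field) or 0.0) for field, w in WEIGHTS.items())


def rank_by_momentum(records, record_type="chain"):
    """Return (name, score) pairs for one record type, highest score first."""
    scored = [
        (r["name"], momentum_score(r))
        for r in records
        if r.get("type") == record_type
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


sample = [
    {"type": "chain", "name": "Ethereum", "tvlChange1d": 1.23, "tvlChange7d": -2.45, "tvlChange30d": 5.67},
    # Hypothetical chain for comparison.
    {"type": "chain", "name": "ExampleChain", "tvlChange1d": 4.0, "tvlChange7d": 2.0, "tvlChange30d": -1.0},
]

for name, score in rank_by_momentum(sample):
    print(f"{name}: {score:.3f}")
```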

Fee Efficiency Analysis

For protocols with both TVL and fee data, calculate a fee yield metric:

- Fee yield = fees30d / tvl × 12 (annualized)

High fee yield relative to TVL indicates efficient capital utilization. Comparing fee yield across protocols in the same category reveals which ones are capturing the most value from their locked capital.
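
Because tvl sits on the protocol records and fees30d on the fee records, computing fee yield means joining the two. The sketch below joins on the slug field, which appears on both record types in the example output; whether slugs always match across the two types is an assumption worth checking on real data. The Aave TVL figure is made up for the example.

```python
def fee_yields(records):
    """Map protocol name -> annualized fee yield (fees30d / tvl * 12),
    joining fee records to protocol records on slug."""
    tvl_by_slug = {
        r["slug"]: r["tvl"]
        for r in records
        if r.get("type") == "protocol" and r.get("slug") and r.get("tvl")
    }
    yields = {}
    for r in records:
        if r.get("type") != "fee":
            continue
        tvl = tvl_by_slug.get(r.get("slug"))
        fees_30d = r.get("fees30d")
        if tvl and fees_30d is not None:
            yields[r["name"]] = fees_30d / tvl * 12
    return yields


sample = [
    # TVL here is illustrative, not a real DefiLlama value.
    {"type": "protocol", "name": "Aave", "slug": "aave", "tvl": 10_000_000_000},
    {"type": "fee", "name": "Aave", "slug": "aave", "fees30d": 27654321},
    {"type": "fee", "name": "NoTvlProtocol", "slug": "no-tvl", "fees30d": 1000},
]

for name, y in fee_yields(sample).items():
    print(f"{name}: {y:.2%} annualized fee yield")
```

Fee records without a matching protocol record are skipped rather than reported with a misleading yield.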

Stablecoin Supply Flow Analysis

Track stablecoin supply changes over time by comparing circulating, circulatingPrevDay, circulatingPrevWeek, and circulatingPrevMonth. Rising supply across major stablecoins signals liquidity inflows into the ecosystem. Cross-reference with chain TVL changes to identify where that liquidity is being deployed.
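
The comparison can be sketched as a percentage change per look-back window. The `supply_changes` helper is hypothetical; the field names come from the stablecoin table, and the USDT figures are the example values shown earlier in this guide.

```python
LOOKBACKS = (
    ("1d", "circulatingPrevDay"),
    ("7d", "circulatingPrevWeek"),
    ("30d", "circulatingPrevMonth"),
)


def supply_changes(record):
    """Percentage change in circulating supply versus each available snapshot."""
    current = record.get("circulating")
    changes = {}
    for label, field in LOOKBACKS:
        previous = record.get(field)
        # Skip windows with no snapshot (or a zero snapshot) to avoid dividing by zero.
        if current is not None and previous:
            changes[label] = (current - previous) / previous * 100
    return changes


usdt = {
    "type": "stablecoin",
    "symbol": "USDT",
    "circulating": 118234567890,
    "circulatingPrevDay": 118200000000,
}

for window, pct in supply_changes(usdt).items():
    print(f"USDT supply change over {window}: {pct:+.4f}%")
```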

Category Concentration Analysis

Group protocol records by category and sum TVL per category. Track how the TVL distribution across categories — Liquid Staking, DEXes, Lending, Bridges, Yield — evolves over time. Category rotation is a reliable signal for understanding which DeFi narratives are driving capital allocation.
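
A minimal version of that grouping is shown below. `category_shares` is a hypothetical helper and the TVL values are made up; running it on each scheduled snapshot and comparing the shares is what reveals rotation between categories.

```python
from collections import Counter


def category_shares(records):
    """Map category -> share of total protocol TVL, largest category first."""
    totals = Counter()
    for r in records:
        if r.get("type") == "protocol":
            totals[r.get("category") or "Unknown"] += r.get("tvl") or 0
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {cat: tvl / grand_total for cat, tvl in totals.most_common()}


# Illustrative records only; the numbers are not real DefiLlama values.
sample = [
    {"type": "protocol", "name": "Lido", "category": "Liquid Staking", "tvl": 600},
    {"type": "protocol", "name": "Aave", "category": "Lending", "tvl": 300},
    {"type": "protocol", "name": "Morpho", "category": "Lending", "tvl": 100},
    {"type": "chain", "name": "Ethereum", "tvl": 5000},  # ignored: not a protocol
]

for category, share in category_shares(sample).items():
    print(f"{category}: {share:.1%}")
```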

Does DefiLlama Have an Official API?

DefiLlama does offer a public API, but working with it directly has real trade-offs:

Multiple Endpoints, Multiple Schemas

Chain data, protocol data, DEX volumes, fees, and stablecoins each live on separate endpoints with different response structures. To get a unified view, you need to hit five different endpoints, map their schemas, and merge the results yourself.

Schema Maintenance

DefiLlama's API schema has evolved over time. Field names change, new fields are added, and response structures are occasionally restructured. Maintaining a custom integration that tracks these changes requires ongoing engineering investment.

The Practical Alternative

For teams that need a clean, unified DeFi dataset without the engineering overhead, the DefiLlama Scraper delivers all five data categories in a single normalized dataset — ready to query immediately with no custom schema mapping required.

Why Use a Pre-Built DefiLlama Scraper

Building your own DefiLlama data pipeline involves more hidden complexity than it first appears:

- Endpoint proliferation — five separate API endpoints, each requiring its own request logic and response handling

- Schema normalization — each endpoint returns differently structured data that must be mapped to a unified format for cross-category analysis

- Pagination and volume — the protocols endpoint returns ~4,000 records. Handling this efficiently requires proper pagination and data management

- Field discovery — not all available fields are documented. Discovering and consistently extracting the full set requires careful inspection of real API responses

- Rate limit handling — hitting multiple endpoints in sequence without triggering limits requires request pacing logic

- Ongoing maintenance — API schema changes require code updates to avoid silent data loss or extraction failures

A maintained, pre-built scraper eliminates this overhead. You get the data, not the infrastructure.

Try the DefiLlama Scraper

The DefiLlama Scraper extracts structured blockchain and DeFi data from DefiLlama — chain TVL, protocol metrics, DEX volumes, fee and revenue data, and stablecoin analytics.

What you get:

- Chain-level TVL, fee data, and historical change metrics — always included

- Protocol TVL, category, and multi-chain deployment data for ~4,000 protocols

- DEX trading volumes across 24h, 7d, 30d, and all-time windows

- Per-protocol fee and revenue data across multiple time windows

- Stablecoin circulating supply, peg type, price, and chain distribution

- Unified JSON or CSV output with a type field for easy filtering

- Scheduled runs for continuous market monitoring and time-series building

- API access for integration into research, analytics, and investment workflows

- No API key or coding required

Start scraping DefiLlama now — your first run takes less than 5 minutes to set up.

If you are building a comprehensive crypto analytics stack, combine DefiLlama data with CryptoPanic sentiment data and on-chain price feeds to build multi-signal models that span fundamentals, sentiment, and market dynamics.

Legal and Ethical Considerations

Web scraping occupies a well-established legal space, but responsible practice matters:

- Public data only — the DefiLlama Scraper accesses publicly available DeFi metrics that anyone can view by visiting DefiLlama. No authentication or account is required

- Open API endpoints — DefiLlama explicitly provides public API endpoints, signaling that programmatic data access is intended and welcomed

- Responsible use — use the data for legitimate purposes such as market research, competitive analysis, and investment research

- Respect rate limits — the scraper is designed to make requests at a reasonable pace to avoid overloading DefiLlama's infrastructure

- Not financial advice — DeFi metrics from DefiLlama are research inputs, not financial advice. Always conduct thorough due diligence before making investment decisions

Frequently Asked Questions

Is scraping DefiLlama legal?

DefiLlama provides publicly accessible DeFi data without requiring authentication. Scraping this public data is generally legal. The platform also exposes open API endpoints, which means the data is intended to be accessible. Always use the data responsibly and avoid overloading DefiLlama's servers with excessive requests.

Does DefiLlama have an official API?

Yes, DefiLlama has a public API, but it requires you to handle endpoint discovery, data normalization, rate limits, and schema changes yourself. The DefiLlama Scraper wraps the API endpoints into a single structured dataset with a unified type field, making it far easier to work with across all five data categories.

What data can be extracted from DefiLlama?

You can extract chain-level TVL and fee data, protocol-level TVL and category info for ~4,000 DeFi protocols, DEX trading volumes across multiple time windows, per-protocol fee and revenue data, and stablecoin circulating supply and peg details.

Can I get historical TVL data from DefiLlama?

Partly. The chain-level data includes TVL change percentages over 1-day, 7-day, and 30-day windows, giving you a rolling view of TVL momentum rather than a full history. For longer historical series, schedule recurring scraper runs to build up a time-series dataset.

Can I export DefiLlama data to CSV or Excel?

Yes. The DefiLlama Scraper supports exporting results as JSON, CSV, or via the Apify API. CSV exports can be opened directly in Excel or Google Sheets for analysis.

How many DeFi protocols does DefiLlama track?

DefiLlama tracks approximately 4,000 DeFi protocols across dozens of blockchains. The scraper can retrieve all of them in a single run when the scrapeProtocols option is enabled.

About the Author

This guide was written by Piotr, a software engineer with hands-on experience building and maintaining web scrapers at scale. He develops and maintains a suite of data extraction tools on the Apify platform, helping businesses automate their data collection workflows.
